The set-up presented below can also be found on the GitHub account of Never Code Alone, since open source is a central concern for the author: after all, it means giving more than taking [1]. The aim of this article and the technologies presented here is to create an automated deployment flow that even the product owner can trigger from a mobile phone in a sunny park, leaving more time for other tasks. This way, not only will we programmers be happier, but software will also be developed more sustainably.

From Maven to GitLab

This article doesn’t want to go too far back in history, but there has long been a perceived divide between Java enterprise development and the PHP “script kiddies”. Yes, our dear programming language is still marked by prejudices and has an elephant as its logo. One very important difference is that Java must be compiled, whereas PHP simply runs as it is. In the context of this article, this means that Java developers have always had to deal with automated processes, while their PHP colleagues somehow got their projects live. In the beginning, there was Maven as a build tool; later came CI servers such as Hudson, from which Jenkins was forked. The countless alternatives, CodeShip for example, are only mentioned in passing here. In the following, we will take a closer look at GitLab, but other deployment technologies are of course also possible. The differences are particularly noticeable in the number of available plug-ins, but this should not play a role in our Symfony and e-commerce projects.

There’s no way back ‒ what’s now will never be undone

Even though Wolfsheim is a terrible band [Ed.: the author is referring to the Wolfsheim song “Kein Zurück”], there’s a lot of truth in their lyrics, and it applies very well to IT. If we look at what exactly we want to bring live, it is, as applications age, often only a few individual files: vendor updates. Usually, not much happens on the database side. In reality, it’s new content elements and, of course, bug fixes. Sometimes only a link or a headline has to be removed. But no matter how much code needs to be brought live, it happens all the time, especially in teams. And even if it doesn’t go live, it always has to be brought to staging environments. In practice, this is very often done manually on the servers: someone connects via SSH, executes a git pull and then clears the cache by hand. This is usually followed by a quick, cursory sign-off before the same thing is done live. With manual processes, bugs introduced by changes are only found much later, and these processes are usually not designed for a rollback at all; if they are, then only to last night’s state. So in practice, development only ever moves forward. As a result, deployments happen frequently and quickly, certainly not just once a fortnight at a scheduled time. Software must be rolled out constantly and quickly, so automating this very process makes perfect sense. And it’s not as difficult as you might think.
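The manual routine described above (connect via SSH, git pull, clear the cache) can be sketched as a single GitLab job. This is a minimal sketch and not the NCA set-up: the job name, the CI/CD variables `DEPLOY_USER`, `DEPLOY_HOST` and `DEPLOY_PATH`, and the assumption that the runner already holds an SSH key for the server are all illustrative.

```yaml
# Minimal sketch: automate "SSH in, git pull, clear cache" for one server.
# DEPLOY_USER, DEPLOY_HOST and DEPLOY_PATH are hypothetical CI/CD variables
# that would have to be defined in the GitLab project settings.
stages:
  - deploy

deploy_to_staging:
  stage: deploy
  script:
    # Assumes the runner can already authenticate against the server via SSH key.
    # cache:clear is the Symfony console command for clearing the cache.
    - ssh "$DEPLOY_USER@$DEPLOY_HOST" "cd '$DEPLOY_PATH' && git pull && php bin/console cache:clear"
```

Every push then runs the same three steps in the same order, and the job log documents what happened on the server.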

Stages adapt and let us grow overall

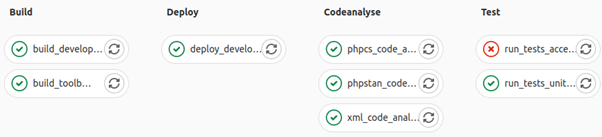

We have already described the simplest use case above: git pull plus clear cache. This exists in almost every PHP application, in the e-commerce as well as the CMS sector. Automating this small step alone makes sense and makes life easier. It can be done with Git hooks and shell scripts; GitLab does nothing else, but it is much more comfortable. Here you can also manage merge requests well in teams, for instance by carrying out code reviews and adding comments. By the way, merge requests are a very reliable process for rollbacks, without any additional infrastructure. As is typical for an enterprise tool, access permissions and much more can also be managed here. Deployments are closely related to tests. This leads to static code analysis after the new version has been rolled out. Tools such as PHPStan, Rector, Psalm, XML linters, CSS validators and many more are extremely practical for this. In the next step, you come to the PHPUnit tests, and with a few good E2E tests you can make sure that everything really works as it should. The entire process becomes transparent, documented and reusable, and anyone can easily execute it via GitLab. With GitLab stages and jobs, complex deployments can be mapped easily, as Listing 1 shows [2].

    stages:
      - build
      - deploy
      - codeanalyse
      - test
      - posttest
      - rollout
      - postrollout

    ...

    # Stage: codeanalyse
    phpstan_code_analyses_against_dev_environment:
      stage: codeanalyse
      image: $TOOLBOX_IMAGE
      script:
        - /var/www/html/vendor/bin/phpstan analyse src -c phpstan.neon
      tags:
        - docker-executor
      except:
        - master

    phpcs_code_analyses_against_dev_environment:
      stage: codeanalyse
      image: $TOOLBOX_IMAGE
      script:
        - /var/www/html/vendor/bin/phpcs
      tags:
        - docker-executor
      except:
        - master

    xml_code_analyses_against_dev_environment:
      stage: codeanalyse
      image: $TOOLBOX_IMAGE
      script:
        - /var/www/html/vendor/bin/xmllint config -v
      tags:
        - docker-executor
      except:
        - master

    # Stage: test
    run_tests_unit_against_dev_environment:
      stage: test
      image: $TOOLBOX_IMAGE
      script:
        # Silence Symfony deprecation notices for the test run
        - export SYMFONY_DEPRECATIONS_HELPER=disabled
        - /var/www/html/vendor/bin/codecept run unit --html -vvv
      artifacts:
        # Keep the test reports even when the job fails
        when: always
        paths:
          - ./tests/_output/*
        expire_in: 1 week
      tags:
        - docker-executor
      except:
        - master

    ...

The current .gitlab-ci.yml file in the open-source GitHub repository of Never Code Alone’s Sulu CMS project has a total of 274 lines. We, and especially the author, believe in the philosophy of open source. We take a lot and, in return, we give everything. All our knowledge about tests, deployments and environments is in this project. In fact, any developer can extend things here. Of course, the project is not yet complete and the final deployment steps are more complex. But it is very easy to define stages and assign the corresponding jobs. Figure 1 shows an example of an NCA pipeline for a feature branch.

Fig. 1: NCA pipeline of a feature branch

This is the feature branch fix/date-in-events. The E2E Codeception tests fail because the environment at the URL simply no longer exists. The example shows impressively how effective a simple frontend test can be compared with all the other quality gates. However, a direct comparison is not possible here: code tests are much more important for the backend and the state of the application. In the code example, there is a list of stages at the top, which correspond to the columns in the figure. Below that, the jobs are defined. Each job is assigned to a stage; we will come to the missing ones in more detail later. What’s important here is how easy it is to add another stage, for example one with a static code analysis. This also applies to the build stage: here it is a pure Docker deployment, but commands or scripts can be executed individually. There is also no significant difference from Maven or Jenkins. However, environment variables are handled differently within the respective deployment technology.
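To illustrate how little is needed for an additional stage, here is a hedged sketch that adds one stage and one job in the style of Listing 1. The stage name `refactoring`, the job name and the assumption that Rector is installed in the toolbox image under the same vendor path are hypothetical. The `variables:` block shows the GitLab way of declaring an environment variable for all jobs.

```yaml
# Sketch: extend the pipeline by one stage and one job.
# The stage name "refactoring" and the job name are hypothetical.
variables:
  # Pipeline-wide environment variable, available in every job's script.
  SYMFONY_DEPRECATIONS_HELPER: disabled

stages:
  - build
  - deploy
  - codeanalyse
  - refactoring   # new stage, appears as an additional column in the pipeline view
  - test

rector_dry_run_against_dev_environment:
  stage: refactoring
  image: $TOOLBOX_IMAGE
  script:
    # --dry-run only reports proposed changes and does not modify any files.
    - /var/www/html/vendor/bin/rector process --dry-run
  tags:
    - docker-executor
  except:
    - master
```

The order of the entries under `stages:` determines the order of execution; a job only needs its `stage:` key to be placed in the right column.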

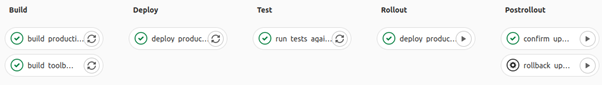

Fig. 2: It can be this clear

This article is primarily intended to encourage people to continue along the path of Continuous Integration. It is simply wonderful when a push triggers things that would otherwise have to be done manually and, unfortunately, often with great concentration. The flow tests each branch and deploys it to its own subdomain, based on the current live data from the database and files. Stage is a special case here: it is not a branch, but the state just before the application goes live. It is simply a subdomain that points to the exact upcoming version and runs a very small part of the E2E tests, just a little more than “the home page loads”. After that, the actual switchover and a confirm upgrade take place. Once the upgrade is confirmed, there is no going back: among other things, the backup container infrastructure is then deleted. Without the confirmation, the rollback would be carried out instead.
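The idea of one subdomain per branch can be sketched with GitLab environments. This is an illustration under assumptions, not the NCA configuration: the domain `example.com` and the script `./deploy-branch.sh` are hypothetical placeholders, while `CI_COMMIT_REF_SLUG` is a predefined GitLab variable containing a URL-safe version of the branch name.

```yaml
# Sketch: deploy each feature branch to its own subdomain.
# example.com and ./deploy-branch.sh are hypothetical placeholders.
stages:
  - deploy

deploy_branch_to_subdomain:
  stage: deploy
  script:
    # CI_COMMIT_REF_SLUG is GitLab's URL-safe form of the branch name,
    # e.g. the branch fix/date-in-events becomes fix-date-in-events.
    - ./deploy-branch.sh "$CI_COMMIT_REF_SLUG"
  environment:
    # Registers the deployment in GitLab and links it to the branch URL.
    name: review/$CI_COMMIT_REF_SLUG
    url: https://$CI_COMMIT_REF_SLUG.example.com
  except:
    - master
```

With the `environment:` block in place, GitLab lists the deployment per branch and links to its URL, so reviewers can open the running version directly.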

Deployment and rollout from anywhere

“The customer won’t pay for it.” This excuse is used to justify everything about legacy code: our poor working conditions, headaches and, in the end, damage to our mental health. There is little joy in that, and above all, we have no time for work that is valued and that we are proud of. That is why the author would like to give a personal insight into how GitLab can improve life. First, I am from Duisburg and call the Pott my home. Here, the kiosk is real high culture. Second, I founded Never Code Alone four and a half years ago after a nervous breakdown and have been campaigning for occupational health and safety in IT ever since.

Since then, I’ve also been working from home. Leaving the office, strolling through the park to the kiosk, getting a glimpse of real life outside IT over a coffee in the sun: that’s always a highlight for me. And then a GitLab pipeline came into my life. Not something like Deployer, but a tool and set-up that’s just perfect. I can finish developing something and push it, then I go to the kiosk and get a coffee. I sit in the park and have a green pipeline. That means absolutely everything is working. I like to take the opportunity to look at the result again on my phone. From here, I can merge in GitLab’s mobile view, and by the time I’m back home it’s live. In truth though, there’s no reason to go home anymore; I can just stay out. Real quality of life.

The NCA Deployment Flow as an Open Source Project

We all need much more time for value-adding work. Deployment processes are extremely important, but unfortunately, they’re also extremely boring, and small mistakes can have devastating consequences. Everyone who has to deal with them is probably familiar with this. The goal is for these processes to be fast and reliable; everything else costs time and nerves. Of course, the process depicted here is very complex and sophisticated. A simple workflow could just put whatever has been merged into master (or better, main) onto Stage and then bring it live. Once you start with automation, you get a lot of time back. You can put that time into innovation, and that’s exactly why I became a programmer: to write something that just does all the things. So please don’t be a user.
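The simple workflow mentioned above could look roughly like the following sketch: everything merged into main is deployed to Stage automatically, and the live rollout waits for a manual click. The script `./deploy.sh` and the job names are hypothetical; `when: manual` is the GitLab keyword for a job that is started by hand.

```yaml
# Sketch of the simple workflow: main goes to Stage automatically, live on click.
# ./deploy.sh and the job names are hypothetical.
stages:
  - deploy
  - rollout

deploy_stage:
  stage: deploy
  script:
    - ./deploy.sh stage
  environment:
    name: stage
  only:
    - main

rollout_live:
  stage: rollout
  script:
    - ./deploy.sh live
  environment:
    name: production
  # The job waits until someone presses the play button in the GitLab UI.
  when: manual
  only:
    - main
```

The manual gate keeps the final decision with a person, while everything before and after it runs without anyone logging in to a server.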

One reason for choosing a technology is to solve a current problem. In doing so, you always pursue a larger, long-term and sustainable goal as well. Deploying faster is the first step: we want to be able to roll out reliable software permanently. Unfortunately, a faster process alone is not the solution to our problems, which are mainly bugs and the challenge of keeping all dependencies up to date. We need time for this important work. When half days of senior developers’ time are billed for rollouts, it borders on fraud and money-making. But we don’t want to sell hours anymore; the dotcom crisis is over. We have full order books, want to produce much more and not be stuck in the legacy swamp. But we can only get out of this swamp if we drain it, and do so sustainably. This requires discipline and up-to-date know-how. We need time and motivation for this because creative work can only be done with passion. And you can’t force that.

Conclusion

The whole pipeline is also available as a demo in a YouTube tutorial [3]. We no longer deploy files, but have complex build processes in the frontend and backend. In addition, there are quality gates and numerous processes that simply have to be triggered via the command line. And all of that takes time. Here, 15 minutes is fast. At Never Code Alone, 2,749 GitLab pipelines have now run; honestly, that would be impossible to do manually, unless you accept overtime and huge frustration at having to start all over again for every little change. The result is that you take shortcuts. And those make things unreliable and keep us awake at night. A pipeline, on the other hand, is a clear competitive advantage. If you deploy faster, you can invoice faster and produce much more efficiently. You don’t need your own admin resources for that. All the knowledge is available in the open source community. More than 50% of PHP developers at conferences rely completely on automated processes and are happy with them. Pipelines create more time for value-adding work and bring us a better life.

Links & Literature

[1] https://github.com/nevercodealone

[2] https://github.com/nevercodealone/cms-symfony-sulu/blob/master/.gitlab-ci.yml