But what is the problem with testing? It lies not in testing itself, but in the perception that testing can be done quickly and at short notice. Professional quality management, however, encompasses much more than just testing. It starts at the very beginning of the project and, over its duration, answers questions about the coverage of planned tests, the progress of the project, the number of known defects, and much more.

IPC NEWSLETTER

All news about PHP and web development

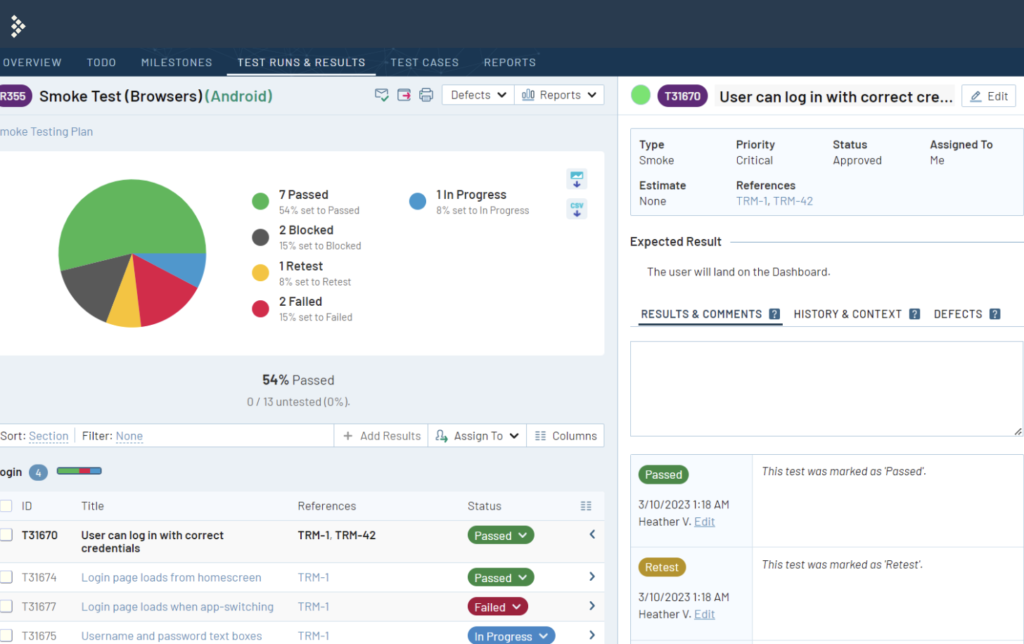

Tools exist for exactly these tasks: so-called test management applications. In this article, we will take a look at the application “TestRail” and learn what such software offers and how we can use it.

However, before we get into the details, it is important to consider what is actually meant by the term “testing” and what tasks are associated with it.

What does professional testing mean?

What does testing actually mean? According to the guidelines of the ISTQB (International Software Testing Qualifications Board), testing includes:

The process consisting of all lifecycle activities (both static and dynamic) that deal with planning, preparation, and evaluation of a software product and associated deliverables.

This definition deliberately takes a broad view of all activities, which means that testing encompasses much more than simply running tests.

If we take a closer look at the start of a new project, it is common knowledge that project managers, technical leads and other stakeholders work with customers to create project plans, divide them into work packages and release them for development. What is often neglected, however, is the role of testers in this crucial planning phase of the project.

In professional quality management or quality assurance, one or more test concepts are developed at the beginning of the project. These test concepts sometimes deal with seemingly simple questions that nevertheless play a central role in the development of test cases.

What are the goals of our testing? Do we want to build trust in the software, or just minimize risks? Evaluate conformance, or simply prove the impact of defects? What documents do we create for our tests? What forms the basis of our tests (concepts, specifications, instructions, functions of the predecessor software)? Which test environments are available, when will they be implemented, and which approaches and methods do we use to develop test cases?

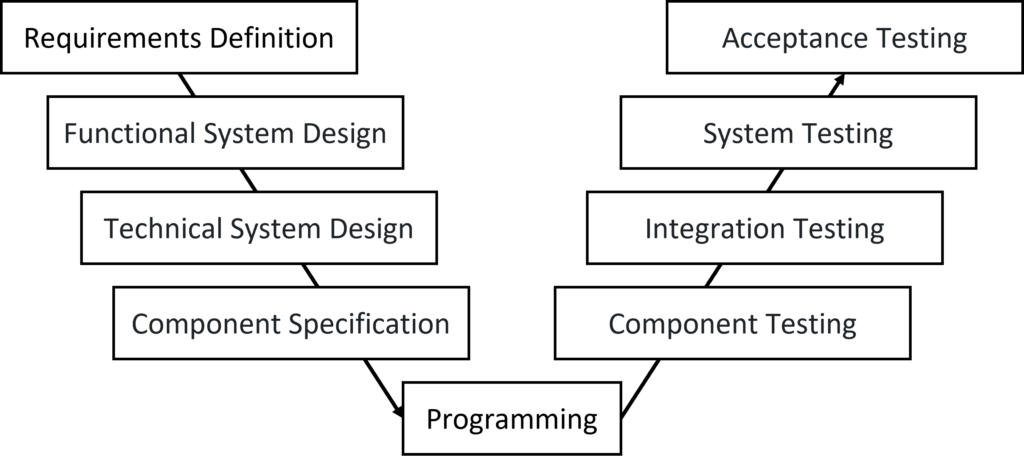

For those who have now had their “aha” moment, it should be added that such test concepts can be elaborated for each test level of the V-Model. In component testing, for example, we usually strive for things like unit tests, code coverage and white-box testing, while in system testing, black-box methods are increasingly used for test case development (equivalence classes, decision tables, etc.). In addition, system testing may already validate things instead of just verifying them. Validation asks whether the result makes sense (does the feature really solve the problem?), while verification checks against requirements (does it work according to the requirement?).
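To make the black-box techniques mentioned above a little more concrete, here is a minimal sketch of equivalence class partitioning with boundary values. The quantity field, its valid range of 1 to 99 and the function name `validate_quantity` are assumptions invented purely for illustration.

```python
# Hypothetical system under test: a cart quantity field that accepts 1..99.
def validate_quantity(quantity: int) -> bool:
    return 1 <= quantity <= 99


# One representative per equivalence class, plus the boundary values.
# Each entry: (description, input value, expected result)
EQUIVALENCE_CLASSES = [
    ("below valid range", -5, False),
    ("just below lower boundary", 0, False),
    ("lower boundary", 1, True),
    ("inside valid range", 42, True),
    ("upper boundary", 99, True),
    ("just above upper boundary", 100, False),
    ("above valid range", 1000, False),
]


def run_equivalence_tests() -> list:
    """Return the descriptions of all failing cases (empty list = all passed)."""
    failures = []
    for description, value, expected in EQUIVALENCE_CLASSES:
        if validate_quantity(value) != expected:
            failures.append(description)
    return failures


failures = run_equivalence_tests()
```

Seven inputs are enough to cover every class and boundary of this field; testing all values from 1 to 99 would add effort without adding information.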

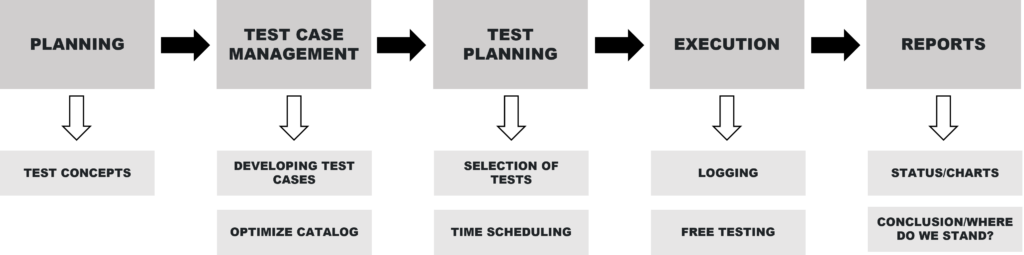

Given the considerable amount of information and the number of work steps defined by the ISTQB (and yes, that was far from all of them), I would like to divide them into four simple areas:

- Test Case Management

- Test Planning

- Test Execution

- Final Reports

This clear structure makes it possible to manage the complexity of the testing process and to ensure that all necessary steps are carried out carefully.

Testing in a Software Project

To make the later use of TestRail easier, let’s now take a rough look at the flow of a project, using the four simplified areas above.

After the test base (requirements, concepts, screenshots, etc.) has been defined, the test concepts have been written, and the kick-off meetings have taken place, it is the testers’ responsibility to develop appropriate test cases. These test cases essentially provide step-by-step instructions for performing a test, whether purely in writing or with visual aids.

Those who have done this before know that there are few templates and few limitations in this regard. Test cases range from simple functional tests, such as technical API queries, to extensive end-to-end scenarios, such as a complete checkout process in an e-commerce system, including payment (in test mode).

A key factor in test design is recognizing that quantity does not necessarily mean quality. It makes little sense to have 1000 tests that cannot possibly be run manually over and over again due to scarce capacity. It makes much more sense to create fewer tests that each contain a large number of implicit checks, so that they cover additional peripheral aspects of the actual case along the way.

Once a list of tests has been created, it is of course useful if it can be filtered. The carefully compiled test catalog is therefore additionally categorized. The so-called “Smoke & Sanity” tests comprise a small number of tests that are so critical that they should be run with every release. Simple regression tests, in turn, provide an overview of optional scenarios that can be rerun as needed (suspected side effects, etc.).

The list of these categories varies from company to company, as there is no official standard. Ultimately, the most important thing is the ability to filter easily based on requirements. Of course, there are many other interesting filtering options, such as a reference Jira ticket ID for the Epic covered in the test, or specific areas of the software such as “Account”, “Checkout” or “Listing” in e-commerce projects.
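The idea of a categorized, filterable catalog can be sketched in a few lines. The class `CatalogEntry`, the function `filter_catalog`, the sample test titles and the Jira IDs are all hypothetical; a test management tool provides this filtering out of the box.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    title: str
    category: str   # e.g. "smoke" or "regression"
    area: str       # e.g. "Account", "Checkout", "Listing"
    reference: str  # e.g. the Jira ID of the covered Epic (invented here)


CATALOG = [
    CatalogEntry("Login with valid credentials", "smoke", "Account", "SHOP-101"),
    CatalogEntry("Change password", "regression", "Account", "SHOP-101"),
    CatalogEntry("Pay by credit card (test mode)", "smoke", "Checkout", "SHOP-205"),
    CatalogEntry("Sort product list by price", "regression", "Listing", "SHOP-310"),
]


def filter_catalog(catalog, **criteria):
    """Return all entries whose attributes match every given criterion."""
    return [
        entry
        for entry in catalog
        if all(getattr(entry, name) == value for name, value in criteria.items())
    ]


smoke_tests = filter_catalog(CATALOG, category="smoke")
account_regression = filter_catalog(CATALOG, area="Account", category="regression")
```

Because every property is just another criterion, adding a new filter dimension later only requires a new field, not a new structure.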

Now that the test catalog has been generated, the question is whether we should directly test it in full. The answer is yes and no! Here it depends on what is crucial for the project management and the stakeholders, i.e. what kind of report they ultimately need.

Therefore, we can create test plans that include either all tests or only a subset of them. Before the release of a plugin (typically with semantic versioning v1.x.y, …), for example, we usually run all “smoke & sanity” tests as well as selected tests for new and existing features. Although it would of course be ideal to run all tests, this is often unrealistic, depending on team size and time pressure.

A relaunch project that is built from scratch should of course be fully tested before final acceptance. For a more economical way of working (shift-left), however, test plans for the areas of the software that are already complete can be scheduled earlier. Tests for the “account” area of an online store could thus start before the “checkout” area is testable. This yields results earlier and makes bugs cheaper to fix (the earlier in development, the cheaper). It remains a gamble, though, because integration errors at the end of the project can still cause side effects. Additional testing at the end is therefore always advisable.

Planning test executions thus involves selecting and compiling tests from our test catalog, taking into account various factors such as their importance, significance, priority and feasibility.
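One possible way to think about this selection is sketched below: smoke tests are always included, and the remaining capacity is filled with other tests in order of priority. The names `PlannedTest` and `build_test_plan`, the effort estimates and the capacity figure are assumptions for illustration, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlannedTest:
    title: str
    category: str  # "smoke" or "regression"
    priority: int  # 1 = highest
    minutes: int   # estimated manual effort


def build_test_plan(catalog, capacity_minutes):
    """Always include smoke tests, then add other tests by priority while capacity remains."""
    plan = [test for test in catalog if test.category == "smoke"]
    remaining = capacity_minutes - sum(test.minutes for test in plan)
    others = sorted(
        (test for test in catalog if test.category != "smoke"),
        key=lambda test: test.priority,
    )
    for test in others:
        if test.minutes <= remaining:
            plan.append(test)
            remaining -= test.minutes
    return plan


catalog = [
    PlannedTest("Login", "smoke", 1, 5),
    PlannedTest("Checkout with payment", "smoke", 1, 15),
    PlannedTest("Change password", "regression", 2, 10),
    PlannedTest("Newsletter opt-in", "regression", 3, 10),
    PlannedTest("Export order history", "regression", 2, 30),
]

release_plan = build_test_plan(catalog, capacity_minutes=45)
```

With 45 minutes available, the two smoke tests take 20 minutes, "Change password" and "Newsletter opt-in" fit into the rest, and the 30-minute export test is left for a later run.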

After the test plans have been created and the work packages are in a testable state, perhaps the simplest but most visible step in the QA process begins: the execution of the tests. This step can be quite straightforward, depending on the quality of the prepared tests, but it always requires a step-by-step approach. (A small tip: in addition to running these tests, free exploratory testing is also recommended to uncover additional paths and bugs.)

During test execution, however, it is critical to log results as accurately as possible. This includes recording screen sizes, the devices and browsers used, screenshots, the ticket ID of the work package, and more. Such logging is necessary for tracking and makes troubleshooting much easier for developers.
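As a rough illustration of what such a log entry might contain, the following sketch models one execution record. The class name `ExecutionRecord`, its fields and the sample values are assumptions; test management tools define their own result fields.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ExecutionRecord:
    case_title: str
    status: str        # e.g. "passed", "failed", "blocked"
    ticket_id: str     # ticket ID of the work package (invented here)
    browser: str
    device: str
    screen_size: str
    screenshots: list = field(default_factory=list)
    comment: str = ""
    # Timestamp is filled in automatically in UTC, ISO 8601 format.
    executed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


record = ExecutionRecord(
    case_title="Pay by credit card (test mode)",
    status="failed",
    ticket_id="SHOP-205",
    browser="Firefox",
    device="Desktop",
    screen_size="1920x1080",
    screenshots=["checkout-error.png"],
    comment="Payment button stays disabled after entering card data.",
)

# A plain dictionary is easy to store or hand over to developers.
log_entry = asdict(record)
```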

After the tests have been run, it’s time to create the final reports. Stakeholders and other involved parties naturally want to know the status of the project. Among other things, they are interested in the test coverage, the number of critical issues found, and whether these issues suggest that a go-live would be premature. The creation of reports is therefore an essential step in the QA process, as they form the basis for decisions that affect the entire project.

Fortunately, tools and applications are available so that we do not lose track of all these tasks. Although in theory simple documents based on Word and Excel can suffice, professional test management applications provide a much more efficient and organized workspace for the entire team.
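The figures mentioned here can be derived from the logged results. The sketch below assumes a deliberately simple definition of coverage (executed test cases divided by the size of the catalog); the function name `summarize` and the sample data are likewise invented for illustration.

```python
from collections import Counter


def summarize(results, catalog_size):
    """Summarize results given as (case_title, status, severity_or_None) tuples."""
    statuses = Counter(status for _, status, _ in results)
    executed = len({title for title, _, _ in results})
    critical = sum(
        1
        for _, status, severity in results
        if status == "failed" and severity == "critical"
    )
    return {
        "coverage_percent": round(100 * executed / catalog_size, 1),
        "passed": statuses["passed"],
        "failed": statuses["failed"],
        "critical_defects": critical,
    }


results = [
    ("Login", "passed", None),
    ("Checkout with payment", "failed", "critical"),
    ("Change password", "failed", "minor"),
]

report = summarize(results, catalog_size=8)
```

For this sample, three of eight catalog entries were executed, so the report shows a coverage of 37.5 percent, one passed test, two failed tests and one critical defect.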

A leading tool in this field is “TestRail”.

Test Management with TestRail

TestRail, developed by Berlin-based Gurock Software, specializes in efficient and comprehensive solutions for QA teams. Its features range from test case management and the creation of detailed test plans to test execution, logging and extensive reporting. For those who want to go even further, TestRail offers an extensive API that can be used to build custom integrations and tailor the QA process.
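To give a first impression of what a custom integration might look like, here is a minimal sketch that prepares a request for reporting a test result through the TestRail API. It assumes the `add_result_for_case` endpoint, HTTP Basic authentication with an API key and the default status IDs (1 for passed, 5 for failed); the instance URL, credentials, IDs and the helper name `build_result_request` are placeholders. The request is only built, not sent, so the example runs without a TestRail account.

```python
import base64
import json
import urllib.request

# Default status IDs (assumed unchanged in the target instance).
STATUS_PASSED = 1
STATUS_FAILED = 5


def build_result_request(base_url, user, api_key, run_id, case_id, status_id, comment):
    """Build (but do not send) a POST request that adds a result for one case in a run."""
    url = f"{base_url}/index.php?/api/v2/add_result_for_case/{run_id}/{case_id}"
    token = base64.b64encode(f"{user}:{api_key}".encode()).decode()
    body = json.dumps({"status_id": status_id, "comment": comment}).encode()
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
    )


request = build_result_request(
    "https://example.testrail.io",  # placeholder instance
    "qa@example.com",               # placeholder user
    "secret-api-key",               # placeholder API key
    run_id=12,
    case_id=345,
    status_id=STATUS_FAILED,
    comment="Payment step fails in Firefox",
)

# urllib.request.urlopen(request) would send it; omitted so the sketch runs offline.
```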

When visiting the TestRail website, it quickly becomes clear that there is more than just software on offer here. TestRail’s content team continuously publishes interesting articles on the subject of testing, which offer real added value thanks to their practical and technically appealing content.

TestRail itself can be used either as a cloud solution or via an on-premise installation. The cloud variant offers a comprehensive solution at quite affordable prices, around EUR 380 per user per year. For those who want additional functions, the Enterprise Cloud version is available for around EUR 780 per user per year. This includes single sign-on, extended access rights, version control of tests and much more.

Installation on your own servers is more expensive, at about EUR 7,700 to EUR 15,620 per year, but already includes a large contingent of users and can be a suitable solution, especially for larger teams and companies.

Once you have chosen a version, such as the cloud solution, you can start using it after a short registration.

Create a project

Let’s start by creating a new project in TestRail. In addition to the project title and access rights, there are settings related to Defects and References, which will be discussed in more detail later in this article. Through these two functions, it is possible to link applications such as Jira with TestRail and get smooth navigation as well as a preview of linked defect tickets or even Epic tickets (references).

Probably the most interesting and important area concerns the type of project we are creating. Here, TestRail offers us three different options for structuring our test catalog.

The user-friendly “Single Repository” option allows us to create a simple and flexible test catalog that can be divided into sections and subsections.

The “Single Repository with Baseline Support” option allows us to keep the simplicity of the first model, but create different branches and versions of test cases. This is especially useful for teams that need to test different product versions simultaneously.

The third variant offers the possibility to use different test catalogs to organize the tests. Test catalogs can be used for functional areas or modules of the application. This type of project is more suitable for teams that need a stricter division of the different areas. A consequence of this is that test executions can only ever include tests from a single test catalog.

For our project launch and greater flexibility, we choose the “Single Repository” type.

Create tests

After the project is created, we are taken to an overview page. At a later stage of the project, we will find more useful information here.

Now it is time to create our first test. To do this, we open the “Test Cases” section in the project navigation.

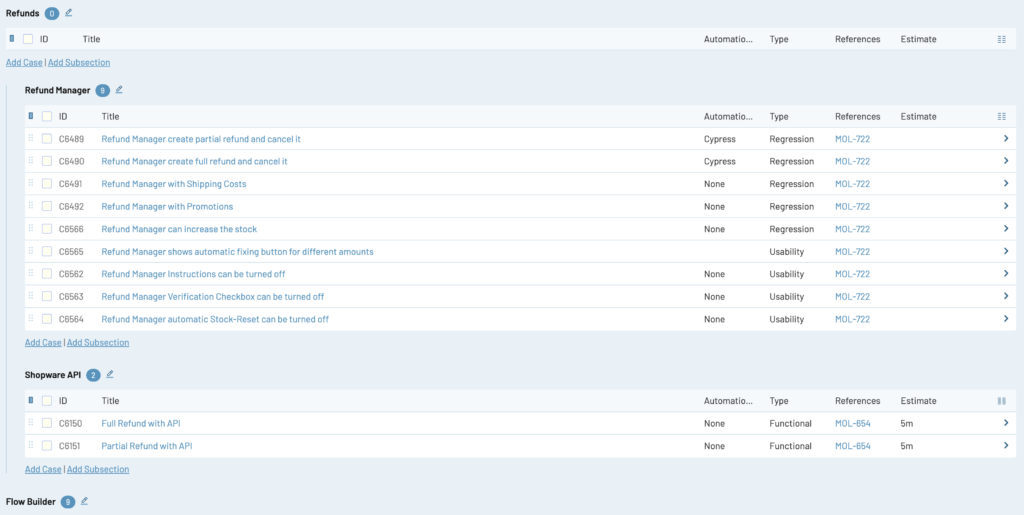

On this page we see the test catalog, which is still empty. Our task now is to create an appropriate number of tests that are well structured and easy to filter.

TestRail offers a variety of options for organizing test cases. In addition to filterable properties, we can also create a hierarchical structure by using sections. There are no hard and fast rules on how this should be done.

We can use sections for different areas of the application like “Checkout” or “Account”, or create them for individual features. I often find it helpful to use sections to break down the application by area or feature, as these can be used later as a guide when creating test plans.

Regardless of whether we decide to use sections or not, the next step is to create our first test.

Looking at the input screen, we notice that a lot of emphasis has been placed on relevant information here.

We have the option to define various properties, such as the type of test (smoke, regression, etc.), priority, automation type and much more. If these options are not enough, we can easily create and add new fields through the administration.

When we define the instructions of a test, we have the option to use one of several templates. Besides the variant with a free text field, we also have a template for step-by-step instructions. With the latter, we can define any number of steps in sequence, each with an expected intermediate result. This not only offers the advantage of clear instructions, but also allows us to specify exact results for each step. This way, we can later see immediately at which step an error occurred.

For testers managing large projects, there is also the option of moving certain steps, such as the “login process on a website”, into separate, central tests and then reusing them in different tests.

Thanks to the extensive editing options for tests in TestRail, there are hardly any limitations when it comes to defining test cases efficiently and precisely.
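The benefit of recording an expected result per step can be sketched as follows. The keys `content` and `expected` are modeled on how separated steps are commonly represented, but the exact structure, the sample steps and the function `first_failed_step` should be treated as assumptions.

```python
# Each step pairs an instruction with its expected intermediate result.
LOGIN_STEPS = [
    {"content": "Open the login page", "expected": "Login form is visible"},
    {"content": "Enter valid credentials", "expected": "Fields accept the input"},
    {"content": "Submit the form", "expected": "Account overview is shown"},
]


def first_failed_step(steps, step_results):
    """Return (1-based index, step) of the first failed step, or None if all passed."""
    for index, (step, passed) in enumerate(zip(steps, step_results), start=1):
        if not passed:
            return index, step
    return None


# Example run: the first two steps pass, the third one fails.
failure = first_failed_step(LOGIN_STEPS, [True, True, False])
```

Here the failure points directly at step 3, so a developer knows that the form was reachable and fillable and that the problem occurs on submission.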
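Conceptually, such reuse works like a reference that is expanded when the test is displayed or executed. The following sketch illustrates the idea with plain data structures; the names `SHARED_STEPS` and `expand_steps` and the marker key `shared` are hypothetical and do not reflect how TestRail stores shared steps internally.

```python
# Centrally maintained step sequences, keyed by a name (hypothetical).
SHARED_STEPS = {
    "login": [
        "Open the login page",
        "Enter valid credentials",
        "Submit the form",
    ],
}

# A test mixes its own steps with a reference to the shared login sequence.
CHANGE_PASSWORD_TEST = [
    {"shared": "login"},
    "Open the account settings",
    "Enter and confirm a new password",
]


def expand_steps(steps, shared):
    """Replace every shared-step reference with the referenced steps."""
    expanded = []
    for step in steps:
        if isinstance(step, dict) and "shared" in step:
            expanded.extend(shared[step["shared"]])
        else:
            expanded.append(step)
    return expanded


full_steps = expand_steps(CHANGE_PASSWORD_TEST, SHARED_STEPS)
```

If the login flow changes, only the central sequence has to be updated, and every test that references it picks up the change.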

Today we learned about the different processes of a testing team in a software project, and started using TestRail to set up our project.

With the tests we created together and the resulting filterable test catalog, we now have a solid basis for planning the actual testing of our application.

In the next part we will use this test catalog to create test plans as well as to execute the tests.

We will also take a look at reporting, traceability, and Cypress integrations via the available TestRail API to complete our flow.